The other day, I was asked to make an Excel spreadsheet populated by a parameterized SQL stored procedure. The spreadsheet is supposed to run each month to analyze the previous month’s sales and commissions. I had written a stored procedure that automatically pulled the previous month’s data. Now they wanted to specify the Start Date and End Date.

I searched all over the internet for how to do this. While I am not sure that my solution is the most efficient, I decided to write it up to help anyone who might have the same problem, and to find out whether there is a better way to do it.

The report is called Monthly Financial Analysis and pulls data from a SQL database through a stored procedure I wrote called usp_GetEndOfMonthSalesMaster. This stored procedure accepts a Start Date and an End Date; if they are not supplied, it automatically selects the previous month’s data.

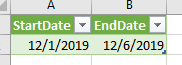

In the Excel spreadsheet, I created one query that calls the stored procedure. To supply the parameters, I created a second sheet that contains only the parameters, and I created a named Table that encompasses them.

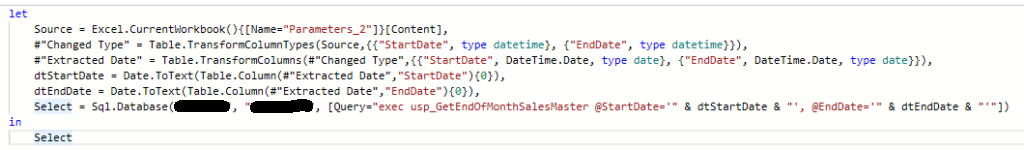

Power Query uses the Power Query M formula language to define the query, which you can edit in the Advanced Editor.

In my query, I used the Advanced Editor. I started by selecting the Parameters table as the Source and then got to the data via the Table.TransformColumns command. Once I had the extracted data, I set the variables dtStartDate and dtEndDate via the Date.ToText function, as illustrated below, so I could include them in the SQL query.
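As a rough sketch of this step, the parameters could be read along the following lines. This is not my exact query: it assumes the named table is called Parameters and has a single row with columns named StartDate and EndDate (hypothetical names), and it uses Date.From on the cell values in place of Table.TransformColumns to keep the example short.

```powerquery
let
    // Assumption: a named Excel table called "Parameters" with one row and
    // two date columns, "StartDate" and "EndDate" (hypothetical names).
    Source = Excel.CurrentWorkbook(){[Name = "Parameters"]}[Content],

    // Take the first (only) row as a record.
    FirstRow = Source{0},

    // Excel usually hands dates over as datetime values, so convert to date
    // first, then format as text. "yyyyMMdd" is a format SQL Server reads
    // the same way regardless of language settings.
    dtStartDate = Date.ToText(Date.From(FirstRow[StartDate]), "yyyyMMdd"),
    dtEndDate = Date.ToText(Date.From(FirstRow[EndDate]), "yyyyMMdd")
in
    // Return both values so they can be inspected in the query preview.
    [StartDate = dtStartDate, EndDate = dtEndDate]
```

Returning a record here is only for checking the values in the preview; in the real query, the two variables are used in later steps instead.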

Calling the stored procedure takes place in the Sql.Database connection command. After specifying the server and database name, you can provide the query text in the options of the method. This is where you can use the date variables.
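A minimal sketch of that call might look like the following. The server name, database name, and date values are placeholders, and passing the two dates positionally in Start Date, End Date order is an assumption about how the procedure declares its parameters.

```powerquery
let
    // Placeholder values; in the real query these come from the Parameters table.
    dtStartDate = "20240101",
    dtEndDate = "20240131",

    // Build the SQL text. Assumption: the procedure takes the start date
    // first and the end date second.
    QueryText = "EXEC usp_GetEndOfMonthSalesMaster '" & dtStartDate & "', '" & dtEndDate & "'",

    // "MyServer" and "MyDatabase" are hypothetical names. The Query option
    // tells Sql.Database to run this text instead of listing the tables.
    Result = Sql.Database("MyServer", "MyDatabase", [Query = QueryText])
in
    Result
```

Because the dates are concatenated into the SQL text, this only makes sense when the parameter cells are under your control. Excel may also prompt for permission to run a native database query the first time the query refreshes.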

This took me a while to figure out. I didn’t even know there was a Power Query M formula language, so I had to work out how it worked first. If anyone has a better way to do this, please leave a comment so we can all learn from your expertise.

I received a reply asking for my contact information and later last night – I received a detailed email from
